Sensing Cloud Eye Control

This weekend, April 22–23, the artist collective Cloud Eye Control will take over areas throughout the museum as part of SFMOMA’s Performance All Ages programming. The group’s work involves performance, animation, video projection, and interactive technology. The activation, titled Rituals for a Nervous Body, features scenes from the group’s production Half Life, created in response to the 2011 Fukushima Daiichi nuclear disaster in Japan, and will be ongoing all day in the Gina and Stuart Peterson White Box. Performance elements in other spaces will include “psychic armor building workshops.”

Photo: Christian Davies

“When SFMOMA extended to us the invitation to conceive work for the museum space, as opposed to our usual theatrical space,” says Cloud Eye Control member Chi-wang Yang, “we started thinking specifically about different kinds of technologies that are changing how we think about our bodies in an environment—given that San Francisco is a very tech-centric city, and given our own existing interest in technology.”

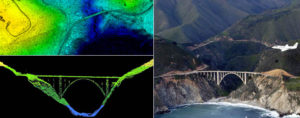

One item on the wish list they submitted: access to one of today’s most cutting-edge and topical technologies, LiDAR. This is the 3D sensor technology that enables driverless cars, bridge-inspection drones, and a host of other applications by generating point-cloud mappings of space in real time. It is a “view” typically only experienced by machines, and it’s about to be ubiquitous. But not yet.

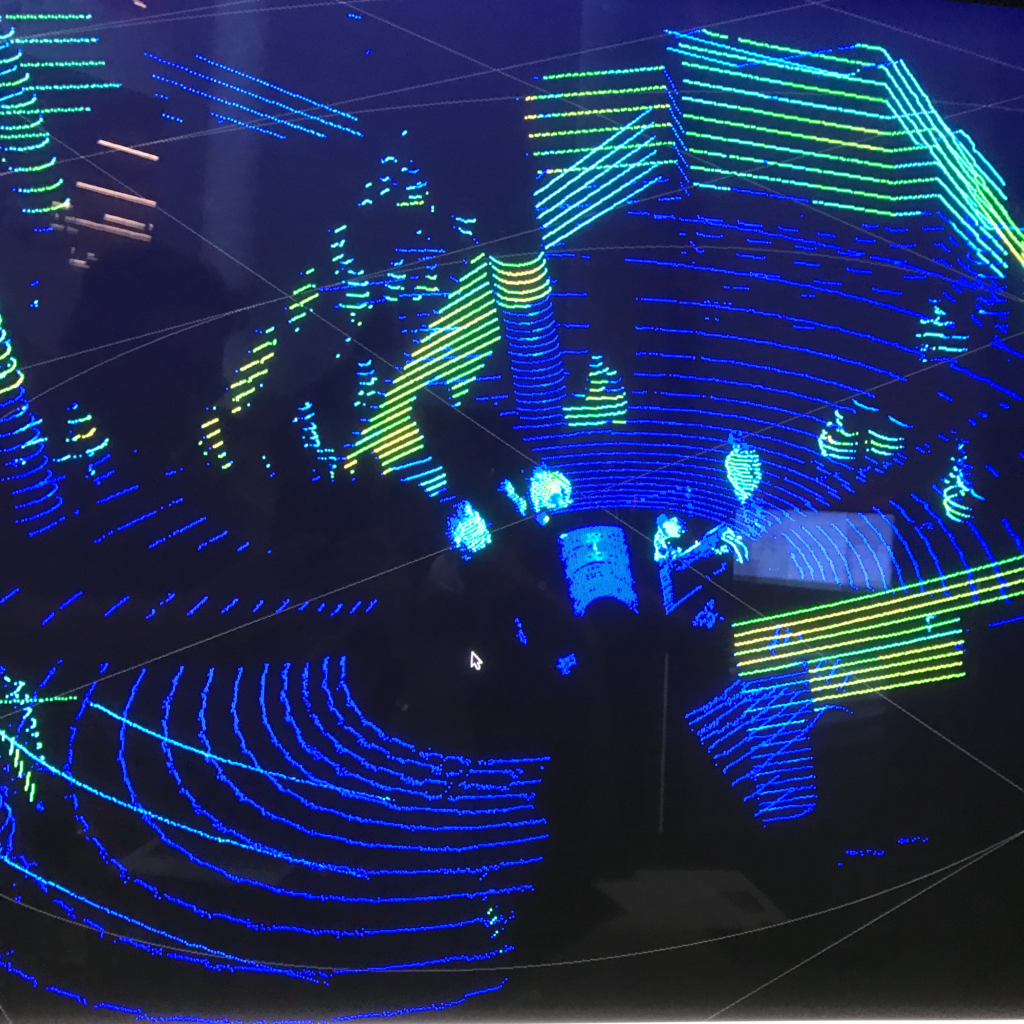

“LiDAR is extremely rare in art contexts—I know of only a few instances where it’s been deployed before, for instance a handful of music videos—because it’s expensive and hard to come by,” notes Yang. “So we’re very excited that the museum made the connection for us with Velodyne, the company that makes it.” This weekend, visitors in the Evelyn and Walter Haas, Jr. Atrium will find themselves LiDAR-mapped on large TV screens, in colors that are determined by the shape and reflectivity of their clothing.

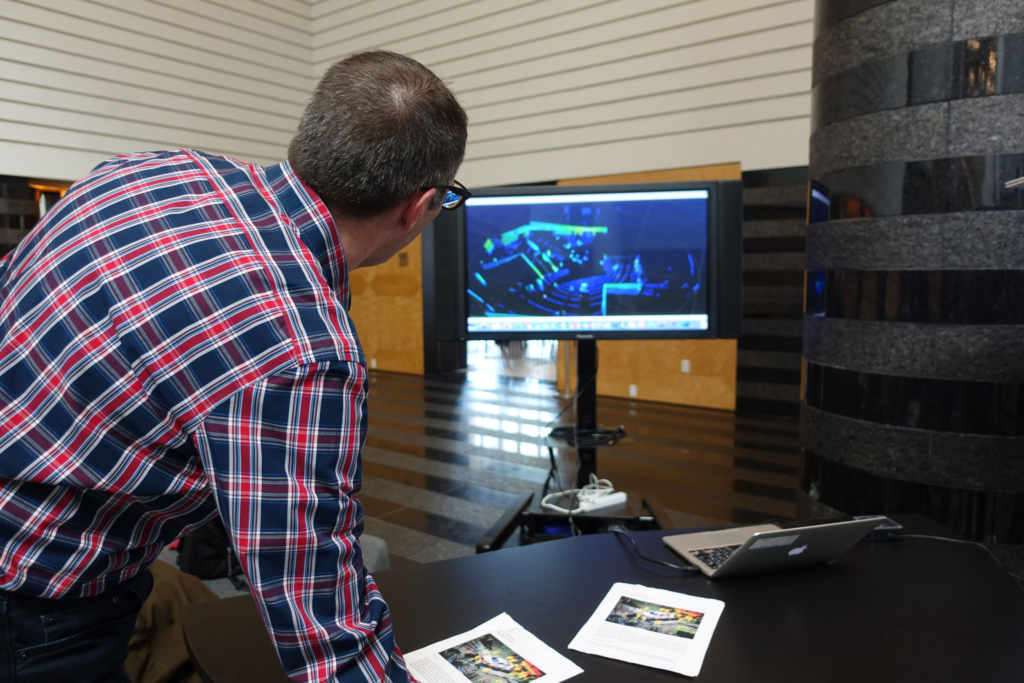

Testing the LiDAR technology in SFMOMA’s Botta Atrium; photo: Lindsey Westbrook

Cloud Eye Control, says Yang, has a fundamental curiosity about information and technology in modern life. Technology has already completely changed how we understand geography, our bodies, even our identities. Automated vehicles will soon be everywhere, which will cause even more radical shifts in how we understand and occupy our environment. Machines are going to be autonomously co-present with us, sharing roads and sharing public and private spaces.

This new mapping approach is incredibly powerful and cutting-edge, Yang says, but unless you frequent trade shows for aerial drones, trucks and tractors, cleaning machines, and robots (or watch a lot of online demos), you’re probably utterly unfamiliar with it. “So this is a great opportunity for us, as artists, to bring it into the museum and give the public a way to interact with something that is about to change all of our lives.”

A cast of “psychic blobs,” a concept Yang has been experimenting with for some time, will be wandering around the museum. The psychic blobs are people costumed head to toe in shaggy suits made of a multitude of plastics, inspired by military ghillie suits. “The commonly accepted idea is that technology creates a rational, linear understanding of the world,” observes Yang. “Data and information are supposed to be neutral, literal. Yet the more we build this intense bond with data in our daily lives, the more we veer into extraordinary abstraction, and also connect to our emotional interior selves in curious ways.” For him, the psychic blobs are manifestations of our own unruly, emotional concepts of our bodies.

Chi-wang Yang, Psychic blob costume; courtesy Cloud Eye Control

In the Atrium, the psychic blobs will have a dual function, also showing up as anomalies on the LiDAR screen. From an engineering perspective, says Jeff Wuendry of Velodyne, their variously colored and reflective materials are interesting because they’ll register differently with the technology than regular visitors’ clothing does. He agrees with Yang that this is a welcome opportunity to bring LiDAR to the broader public. “With the advent of driverless driving, there is general hesitation about a car that can see. This weekend is about demonstrating to people that a sensor can indeed do that, and hopefully make them more comfortable around automation. We’re looking forward to presenting the technology behind autonomous autos in a different light.”