Team Pixelmasher

On October 30, 2014, SFMOMA and Stamen Design hosted Art + Data Day at the new Gray Area Art and Technology Theater in San Francisco. The event was formatted as an “unhackathon” focusing on collaboration and problem solving — rather than competition and speed — as a way of testing an alpha version of SFMOMA’s new API. Since public sharing is a focus of SFMOMA’s API, all code created during the event has been posted to GitHub, and all of the projects will be summarized on SFMOMA Lab in the coming weeks, beginning with Team Pixelmasher’s project.

Team Pixelmasher formed around the idea of visualizing artworks from the SFMOMA collection, in aggregate and in contrast, to see what (if any) patterns emerged. Team members Micah Elizabeth Scott (a creative technologist exploring interactions between technology, art, and humans) and Nadav Hochman (a doctoral student using computational methods for visually analyzing large-scale image data) quickly focused in on this idea of aggregating and analyzing the images through the collection API. They were joined by Ian Smith-Heisters, a design technologist at Stamen; Keir Winesmith, head of web and digital platforms at SFMOMA; and myself, web production coordinator at SFMOMA. Many members of the group had previously written scripts to process and visualize large numbers of images, and having ready access to this code was a valuable head start to the day, considering the limited time we had. I recommend that anyone planning a similar event encourage participants to bring and use familiar tools, libraries, and patterns.

We started by identifying thematic buckets of artworks we could focus on. We first thought of looking at a specific year and landed on 1969 — a historic year that included the moon landing and various other important happenings in San Francisco. However, we found that restricting ourselves to a single year would not yield enough images for our purposes, as only a percentage of the artworks contained in the collection API have associated images. Slightly changing course, we decided to focus on artworks from the 1970s, since about 70% of the photographs from that decade have representative images available in the collection API.

Micah turned to a script she had previously written that downloads, processes, and overlays large numbers of images from the internet, creating a kind of virtual long exposure. Next, she removed some of the “noise” to make the more unique aspects of the amalgamation visible.

1970s photography long exposure

1970s photography high-pass detail

After visualizing the set of 1970s artworks in this fashion, she did the same with artworks from the 1890s. The result was surprisingly similar, since many of artworks from the two decades in question — the 1890s and the 1970s — are black and white photographs. In the end, the resulting images from the 1970s are striking in and of themselves, and present a distinct view of a decade’s worth of artworks from SFMOMA’s collection.

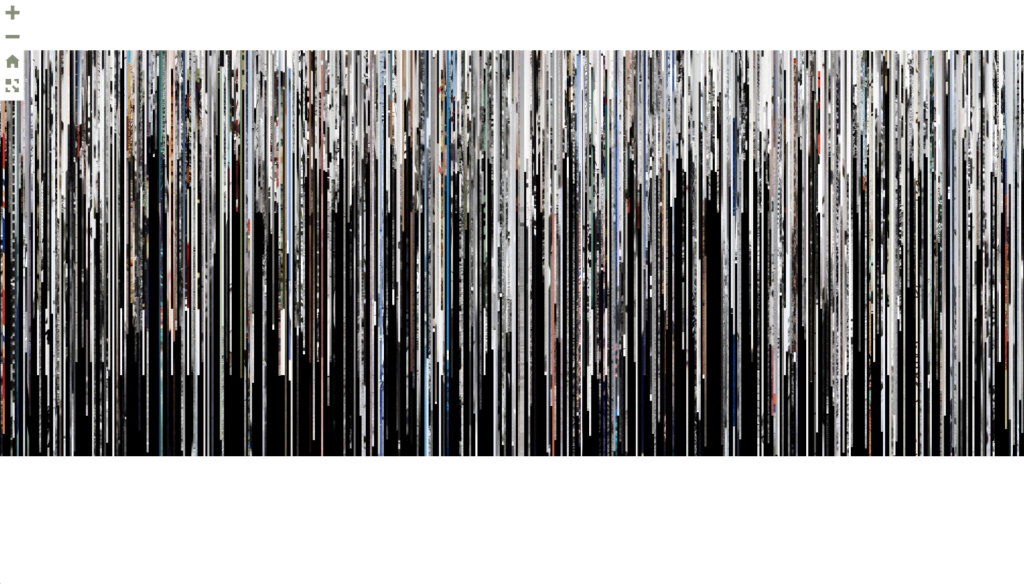

Creating two other ways to visualize the photographs in aggregate and contrast, Nadav utilized code he wrote previously to analyze large numbers of images. He first took a five-pixel slice out of the center of each image in the set. Next, he laid out each slice horizontally.

1970s photography vertical slice

The result is a fascinating glimpse of every image, which resembles the spines of a record collection. You can see stripes of bright color among swathes of grayscale, and chunks of works that are similar in both color palette and size. The varying heights of the samples represent the size of the JPG or PNG file that was available via the API, rather than the physical size of the photograph. Currently, the images in the API are representational, rather than high-resolution, and they’re not standardized in file size or format. SFMOMA, like most museums, would like to include high-resolution images of every collection artwork in their API; however, that is challenging for a museum with limited archiving resources and a large, quickly growing collection.

The next visualization Nadav created presents a totally different view. Nadav’s script analyzed the average brightness and contrast of 2,270 photographs from the 1970s, and the photographs were then plotted across a canvas. Brightness determined how far from the center of the canvas a photograph was positioned: the brighter the photograph, the farther it was from the center. Likewise, contrast within the photograph determined where it appeared on the canvas: contrast increased counterclockwise from 90 degrees.

1970s photography radial I-mean contrast

Ian created a visualization that displays every 1970s photograph from the SFMOMA collection at 60 frames per second. Each image is chosen randomly, and the resulting animation loops endlessly. The images are not cropped, so as they fly by you can see the variations in size and aspect ratio at the edges of the screen. The color images jump out amidst the majority of black and white photographs. Every so often, one image imprints and lingers in your mind even after many others have flashed by; it’s a transfixing effect.

Ian also built a stop functionality into the application, turning it into a kind of game to test your reflexes. If an image catches your eye, will you be fast enough to hit the spacebar to stop the animation and look closer? Multiple people mentioned experiencing a physical reaction (recognition, excitement, etc.) to an image as it flashed by — inspiring the idea of future research into biometrically capturing this reaction, perhaps by measuring the viewer’s pulse or heart rate.

Video of the application in action. Note: This video is not playing back at 60 frames per second.

Ian’s application does one thing very simply: it shows each image in a set, one after another, randomly selected. Interpretation and pattern-seeking is left entirely up to the viewer’s brain. The developers in the room found this format particularly mesmerizing, perhaps because they’re hardwired to discover logic and patterns in the flashing images.

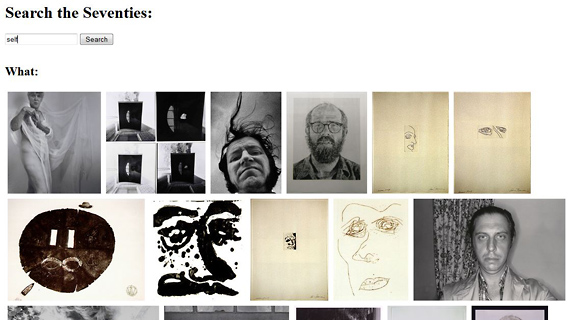

The final project to come out of team Pixelmasher was Search the Seventies, a basic search engine focused on artworks created in the 1970s. After experiencing these beautiful, abstract ways of essentially looking at all of the artworks from the 1970s in SFMOMA’s collection at once, I found I wanted a way to leisurely browse through those same images. Keir had previously written a search engine that sends keywords to the search endpoint of the API and searches the artwork title field for the query. (Expanding the API’s search functionality to other fields is underway.) His search engine returns a list of links with the query string in the title, listed in order of relevance.

I modified Keir’s search engine to search only artworks created in the 1970s, taking a query and returning only the images of artworks with that query in the title. The artwork title appears on mouseover. Searching a specific time period for a keyword and returning a page full of images is a simple, but also satisfying way to look at images at your own pace.

Search the Seventies

Conclusion

Using the collection API, Team Pixelmasher cranked out four creative ways of looking at one set of artworks from the SFMOMA collection in a matter of hours. It was never the main goal of Art + Data Day to walk away with working code for fully-fleshed out applications, but this group’s skills and experience allowed them to do just that. The API also performed incredibly well, responding to multiple concurrent calls (we were basically saying, “Give us all of the images and right now, please”). At first we focused on too narrow a subset of the collection to create a useful visualization, but once we expanded the time frame, plenty of visual information was presented. Beyond focusing on just a decade’s worth of artwork, we thought a familiarity with SFMOMA’s collection would be helpful when trying to define a particular set of works to interrogate. Another issue that this group’s work raised is one that has been discussed and studied extensively since the idea of creating an API was first suggested: image copyright. There are plenty of people who will be drawn to the vast repository of artwork images this API contains, Micah, Nadav, and Ian included. SFMOMA’s approach to copyright for the API, and for the images in particular, warrants its own story, which will be published on SFMOMA Lab in the coming months.